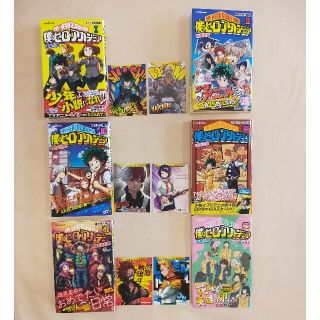

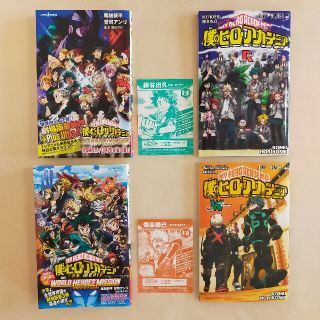

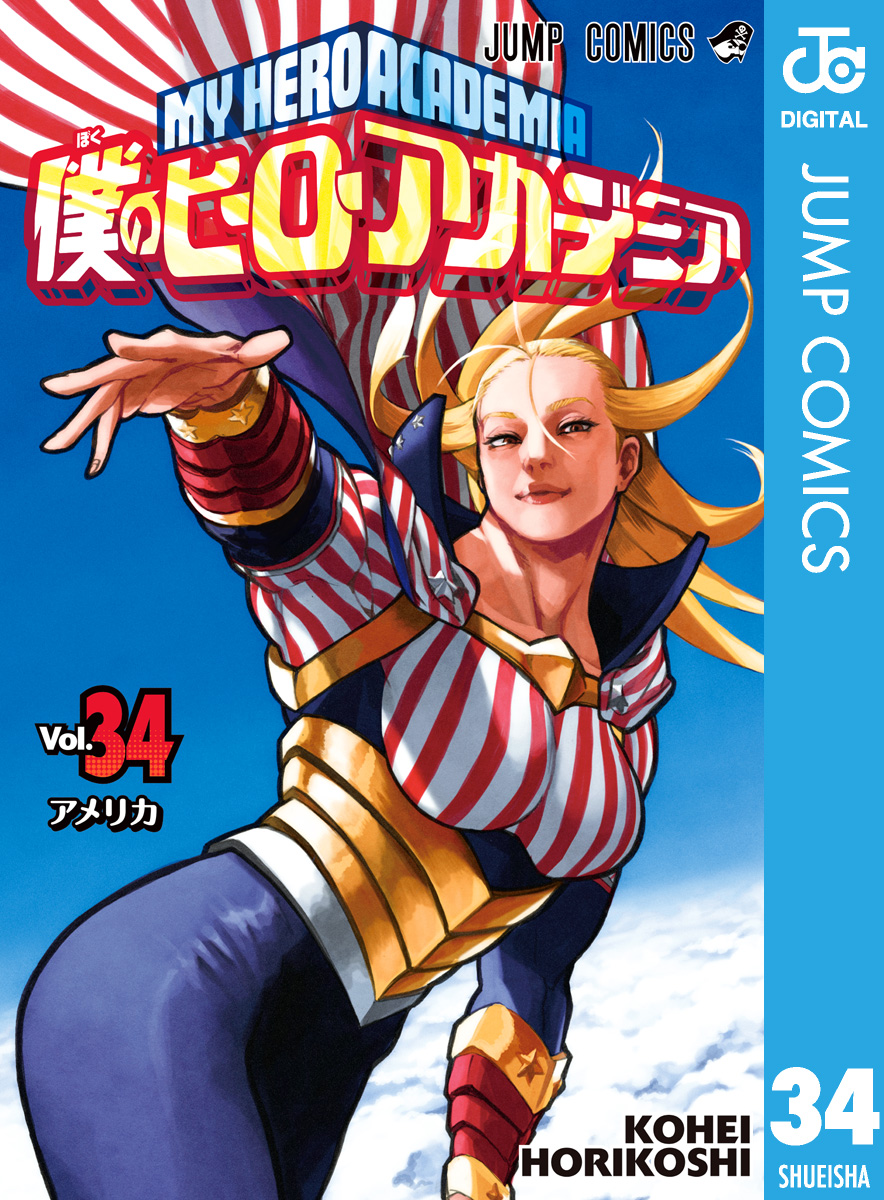

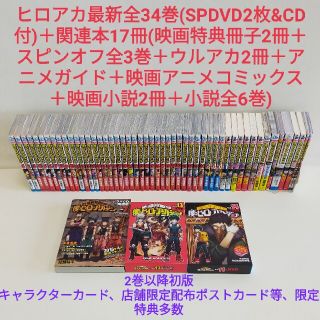

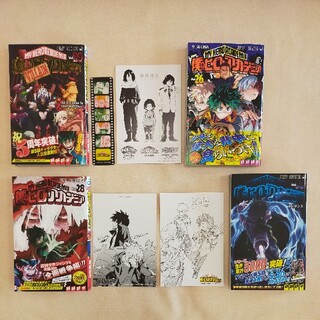

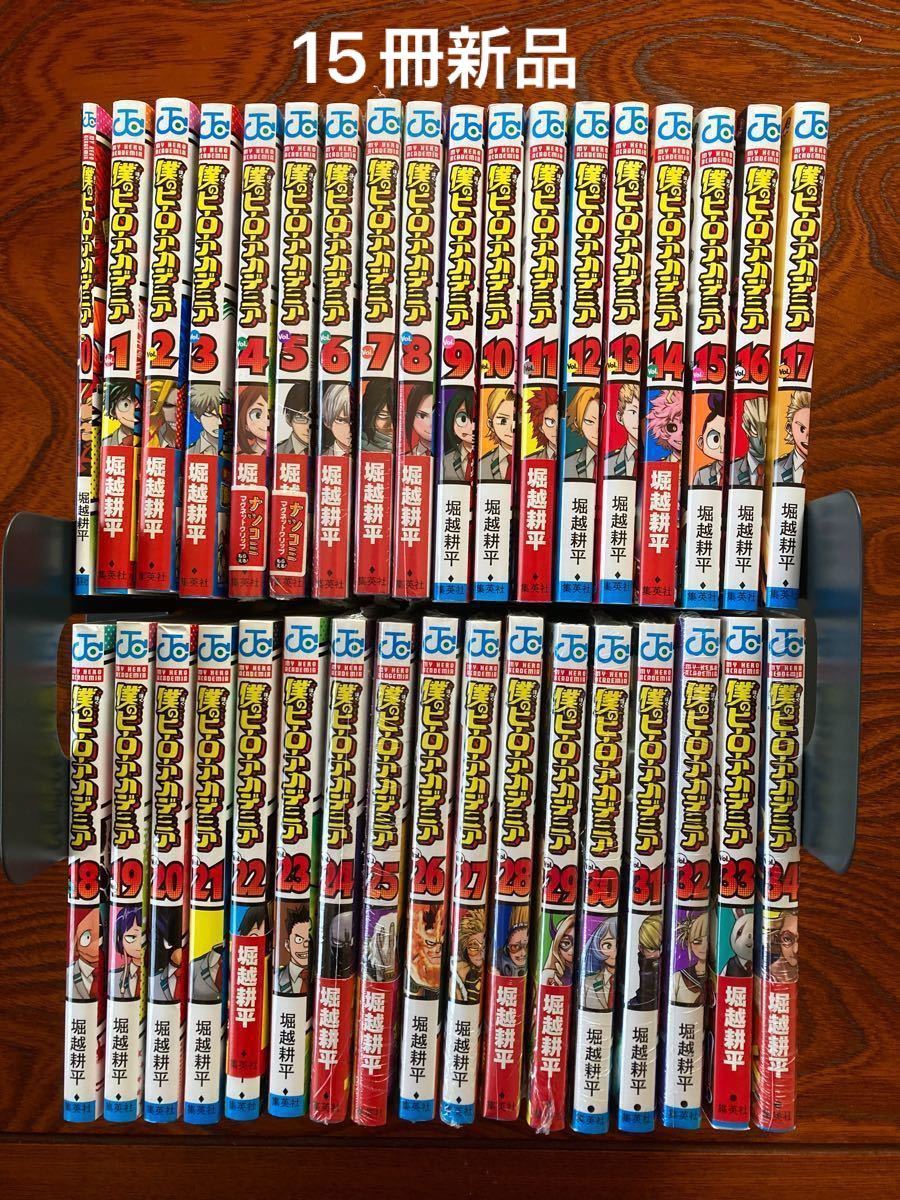

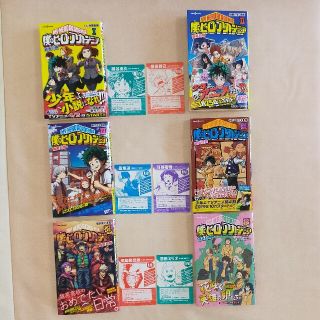

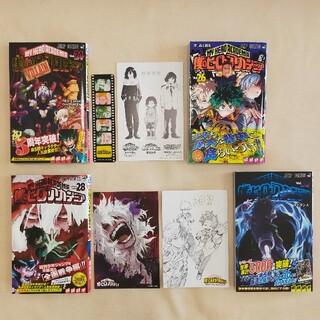

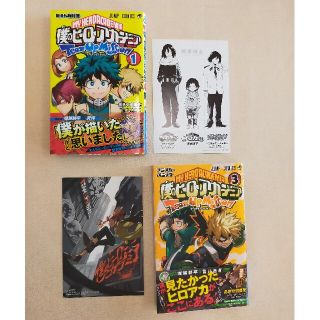

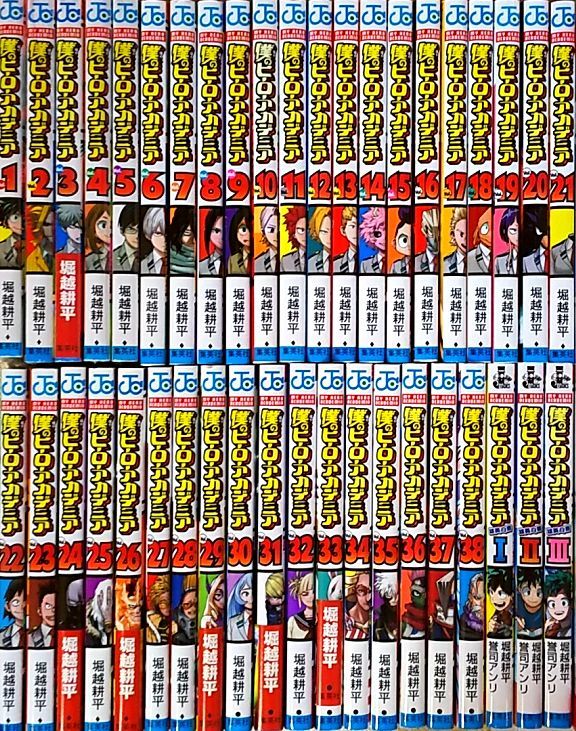

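My Hero Academia (僕のヒーローアカデミア) Vols. 1-34 + 7 related books

(Tax included) Shipping included

Item description

I read the first half of the series once; the second half was stored unread.

The books are in clean condition.

All of them were purchased new.

Please buy only if you understand that these are used items.

Returns and refunds are not accepted.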

My Hero Academia

HeroAca (ヒロアカ)

Izuku Midoriya

Katsuki Bakugo

Shoto Todoroki

Item information

| Category | Books, Music & Games > Manga > Shonen manga |
|---|---|
| Item condition | Like new |


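Mercari's safety measures

Payment is held by the Mercari administrative office and transferred to the seller after the transaction is rated.

Seller

Fast shipping

This seller ships within 24 hours on average.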